When Big Legal Data Isn’t Big Enough: Limitations in Legal Data Analytics [Report]

Hong Kong (PRWEB) September 26, 2016 -- The mass harvesting and storage of court records and other legal data give corporate litigants and legal counsel an opportunity to support their decision making with legal data analytics. But without proper statistical methods, the analysis of legal data can be invalid or misleading.

A new report from SettlementAnalytics™ introduces legal professionals to appropriate analytical methods and online tools that facilitate a better understanding of ‘Big Legal Data’ and how it should be used. The report explains key concepts at an introductory level and refers to applications that illustrate how to evaluate the statistical merit of legal data.

The central problem is that legal professionals do not analyze quantitative data merely to observe historical facts, but rather to draw meaningful inferences about the present and to make decisions. Although it is widely understood, it is sometimes forgotten that past data is not a reliable guide to the present: the past is only a sample of what could happen, and often a very imperfect one.

The bottom line is that not all samples of legal data contain sufficient information to be usefully applied to a decision. By the time big data sets are filtered down to the type of matter that is relevant, sample sizes may be too small and measurements may be exposed to potentially large sampling errors. If Big Data becomes ‘small data’, it could be quite useless or worse.
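The effect of shrinking sample sizes can be sketched with a short calculation. The example below is illustrative only and is not taken from the report: it assumes a hypothetical 60% observed win rate and uses the Wilson score interval, one standard way to attach a 95% confidence interval to a proportion. The function name `wilson_interval` and all figures are assumptions made for the illustration.

```python
import math
from typing import Tuple


def wilson_interval(wins: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% Wilson score interval for an observed win rate.

    wins: number of favourable outcomes in the sample (hypothetical data)
    n:    sample size after filtering down to the relevant matters
    z:    normal quantile; 1.96 corresponds to roughly 95% confidence
    """
    if n <= 0 or not 0 <= wins <= n:
        raise ValueError("need n > 0 and 0 <= wins <= n")
    p_hat = wins / n
    denom = 1 + z ** 2 / n
    centre = (p_hat + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z ** 2 / (4 * n ** 2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


if __name__ == "__main__":
    # Same hypothetical 60% win rate, observed in progressively smaller samples.
    for wins, n in [(6000, 10000), (60, 100), (6, 10)]:
        low, high = wilson_interval(wins, n)
        print(f"{wins}/{n}: win rate 60%, 95% interval {low:.1%} to {high:.1%}")
```

With ten thousand cases the interval is narrow; with ten cases it spans most of the range between a clearly losing and a clearly winning record, which is the sense in which ‘small data’ can mislead.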

"To be of value in real world decisions, legal data analytics must be able to distinguish between the inherent randomness in historical data samples and statistically meaningful legal track records," said Robert Parnell, author of the report and founder of SettlementAnalytics. "This is the stuff of inferential statistics."

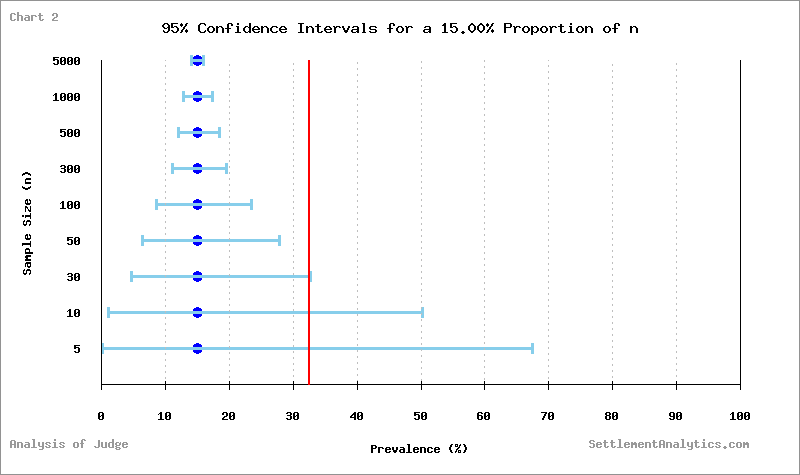

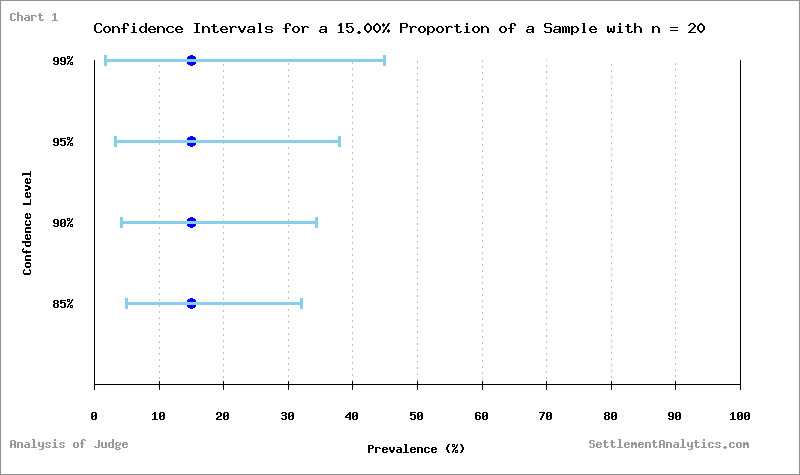

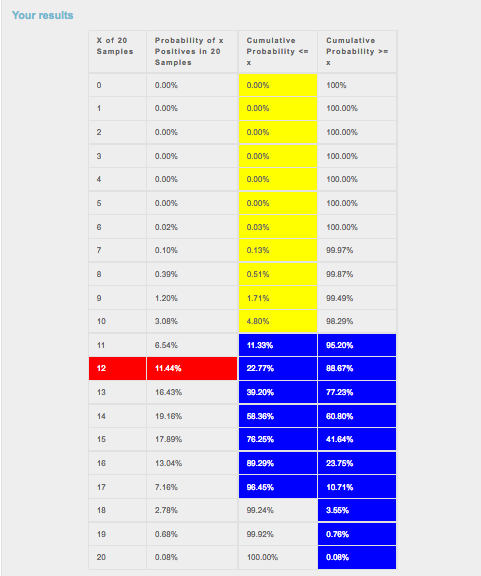

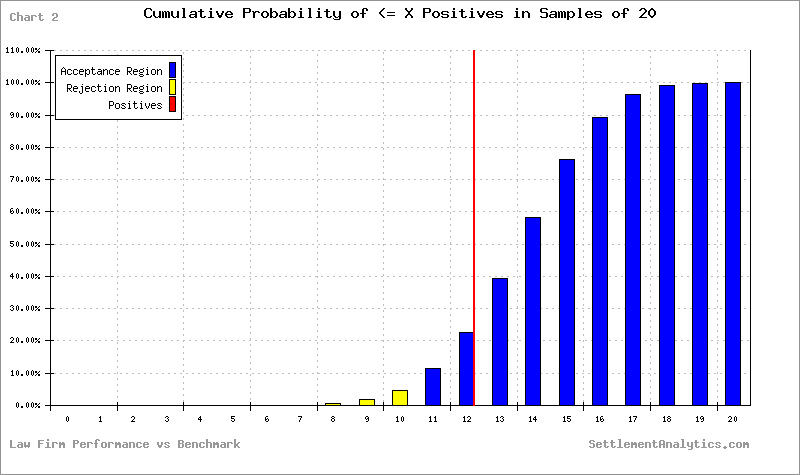

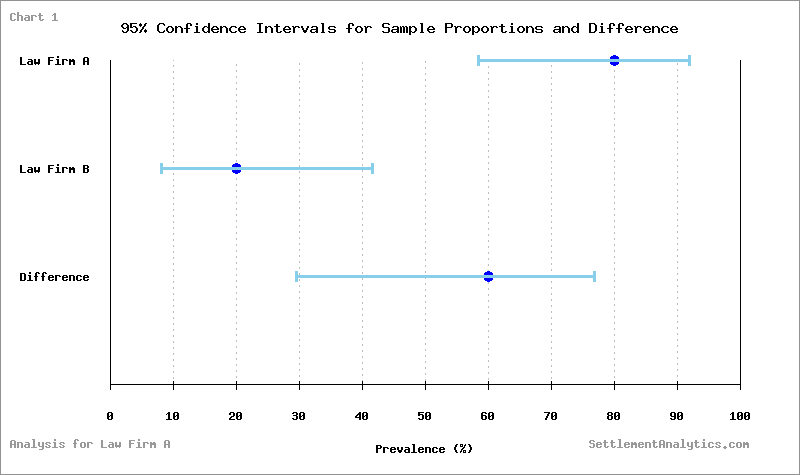

The report provides a mostly non-technical introduction to the subject, which should be accessible to legal professionals without a quantitative background. Example analyses are included, which show how to quantify uncertainty in the measurement of judicial decisions, and how to determine if a law firm’s track record is statistically significant relative to its peer group. The results of statistical analyses are presented in charts and tables.
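As a rough illustration of what testing a track record against a peer group can involve, the sketch below computes an exact one-sided binomial p-value: the probability of seeing at least the observed number of wins if the firm's true win rate were no better than the peer-group rate. This is a generic textbook test, not necessarily the method used in the report, and the function name `binomial_upper_tail`, the peer rate and the win counts are all hypothetical.

```python
from math import comb


def binomial_upper_tail(wins: int, n: int, base_rate: float) -> float:
    """P(X >= wins) for X ~ Binomial(n, base_rate).

    wins:      cases the firm won (hypothetical data)
    n:         cases the firm handled
    base_rate: assumed peer-group win rate, treated as known
    """
    if not 0 <= wins <= n:
        raise ValueError("need 0 <= wins <= n")
    if not 0.0 <= base_rate <= 1.0:
        raise ValueError("base_rate must lie in [0, 1]")
    return sum(
        comb(n, k) * base_rate ** k * (1 - base_rate) ** (n - k)
        for k in range(wins, n + 1)
    )


if __name__ == "__main__":
    peer_rate = 0.5  # hypothetical peer-group win rate
    # The same 70% record, observed over 10 cases and over 100 cases.
    for wins, n in [(7, 10), (70, 100)]:
        p_value = binomial_upper_tail(wins, n, peer_rate)
        print(f"{wins}/{n} wins vs peer rate {peer_rate:.0%}: p-value = {p_value:.5f}")
```

Under these assumptions, a 70% record over ten cases is well within what chance alone would produce, while the same record over a hundred cases is very unlikely to be luck. A fuller analysis would also need to account for case mix and for comparing many firms at once.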

The report graphically illustrates an important point: although the volume of data available to legal professionals will sometimes be sufficient to distinguish an informational signal from the random noise in data, this will not always be the case. Using the methods outlined in this report, litigants and counsel will be able to interrogate the statistical validity of court data and evaluate the significance of quantitative legal metrics.

Data analytics is more complex than is generally acknowledged. Although it is appealing to view data analysis as a simple tool, there is a danger of neglecting the science in what is basically data science, and doing so can undermine sound decision making. To draw an analogy, legal data analytics without inferential statistics is like legal argument without case law or rules of precedent: it lacks a meaningful point of reference and authority.

Where data analytics is used for decision making, it must include an allowance for the role of inferential statistics – only then can its informational content be properly evaluated. By using inferential statistical methods and paying careful attention to the complexities of data analysis, corporate litigants and Big Law can benefit from this new frontier in Big Data.

About the Author

Robert Parnell is the president and CEO of SettlementAnalytics, which he established in 2011 to bring the application of quantitative and scientific methods to the economic analysis of litigation and settlement bargaining strategy. Prior to founding SettlementAnalytics, Mr. Parnell had over 18 years of experience in finance and institutional investment management in analytic, risk management, portfolio management and executive roles. Mr. Parnell holds a BSc in physics from King’s College London, an MBA from the Ivey Business School and a law degree from the University of London International Programmes. Mr. Parnell is also a holder of the right to use the Chartered Financial Analyst® designation.

About SettlementAnalytics

SettlementAnalytics™ is a legal-economic research and software development firm focused on the application of game theory and model-based analytics to the strategic analysis of litigation and settlement decision making. Software applications developed by SettlementAnalytics combine canonical and proprietary game theory models of legal conflict, and also integrate ideas from information economics, financial economics, bargaining theory and Monte Carlo simulation. The firm's flagship software service – OptiSettle™ – is the first commercially available software application to provide a game theory analytics platform for legal disputes.

SettlementAnalytics – The Science of Litigation™

For more information please contact:

Robert Parnell, CFA, LLB

President and CEO

Email: info(at)settlementanalytics(dot)com

settlementanalytics.com

Tel: +44 (0) 203 287 9443

Tel: +852 3589 3377

SettlementAnalytics

Macro Research Associates Limited

1301 Bank of America Tower

12 Harcourt Road, Suite 1479

Central, Hong Kong
