Kadho Releases KidSense, a Multilingual Speech Recognition Engine for Kids

NEWPORT BEACH, Calif. (PRWEB) November 17, 2017 -- Tech startup Kadho has introduced a new speech recognition model designed exclusively for children from 4 to 12 years of age. The company says the technology's advanced features allow it to outperform notable engines such as IBM Watson, Microsoft Bing, and Nuance on children's speech. Those systems are designed and trained on adult data and, as they strive to reach the highest benchmarks, they overlook one of the most important categories of users: children. Kadho’s expertise in neuroscience and artificial intelligence, along with its focus on early childhood education, supported the development of KidSense.

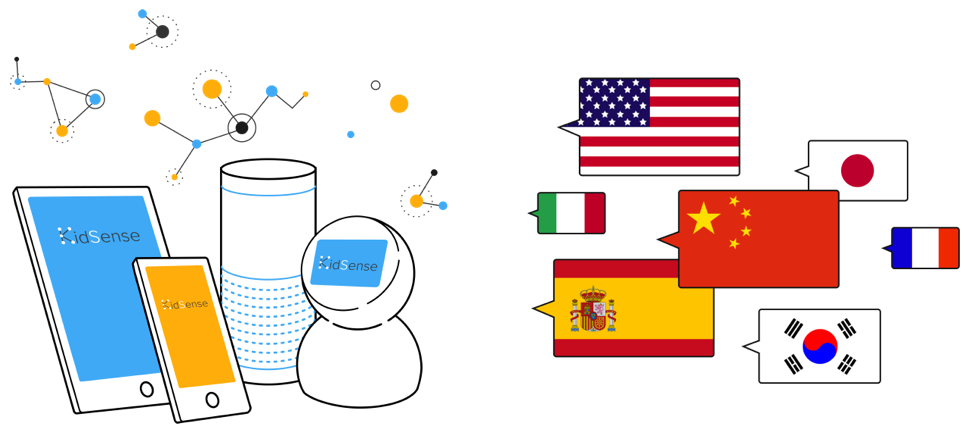

Kadho’s CEO, Kaveh Azartash, PhD, said, “Children grow up with technology, and technology contributes very effectively to a child’s growth. It is a pain to see the latest advances in mobile and communication technology such as Google Home, Amazon Echo or Siri fail badly to engage with children. Put simply, children are unable to speak to technology because none of these systems can decode how kids speak.” Dr. Azartash added, “We are thrilled to announce the launch of our unique speech recognition engine KidSense. We believe KidSense would duly help to fill the gap created by existent speech recognition modules through its exclusive kid-accent decoding capacity.”

KidSense was built from targeted data collected from native and non-native speakers of English, Mandarin, Korean, Japanese, Spanish, and French. In line with the rollout plan, the first phase of KidSense supports English, Korean, and Mandarin. The company plans to collect more data to support the top 10 languages in the world.

KidSense is built by a team of neuroscientists and artificial intelligence engineers who set out to integrate the fundamentals of language acquisition in early childhood with the latest advances in artificial intelligence. KidSense also provides dual-language speech recognition, which makes it a one-of-a-kind medium for language teaching products. The technology reflects a novel neuroscience-based approach to solving the problem of children communicating with technology.

Kadho’s Chief Technology Officer, Dr. Dhonam Pemba, said, “The real challenge in building a speech recognition model occurs when you don’t have hundreds of thousands of hours of speech data. We have developed a novel approach leveraging the language independent features of children’s speeches to bootstrap our neural networks that allowed us to build better quality acoustic models with less data than the leading tech giants.”

KidSense currently provides speech-to-text and speech and pronunciation evaluation services through its cognitive engines. It integrates a number of language and acoustic models and has been validated through multiple partnerships. For more information, please visit http://www.kidsense.ai

About Kadho

Kadho is an Irvine, California-based tech company that is poised to revolutionize the language learning process. The company has been developing educational technologies since 2014 and has operations in the USA, China, Korea, and Hong Kong. It aims to introduce a new learning method that uses science and immersive conversation to augment the language learning process for children. Using neuroscience and artificial intelligence, it has created a dynamic product for children called KidSense. The company is committed to helping children learn various world languages through its unique speech engine product. For more information, please contact kadho(at)kadho(dot)com or visit http://www.kadho.com

Kadho Inc, Kadho Inc., http://www.kadhoSports.com, +1 714-477-3935, [email protected]
