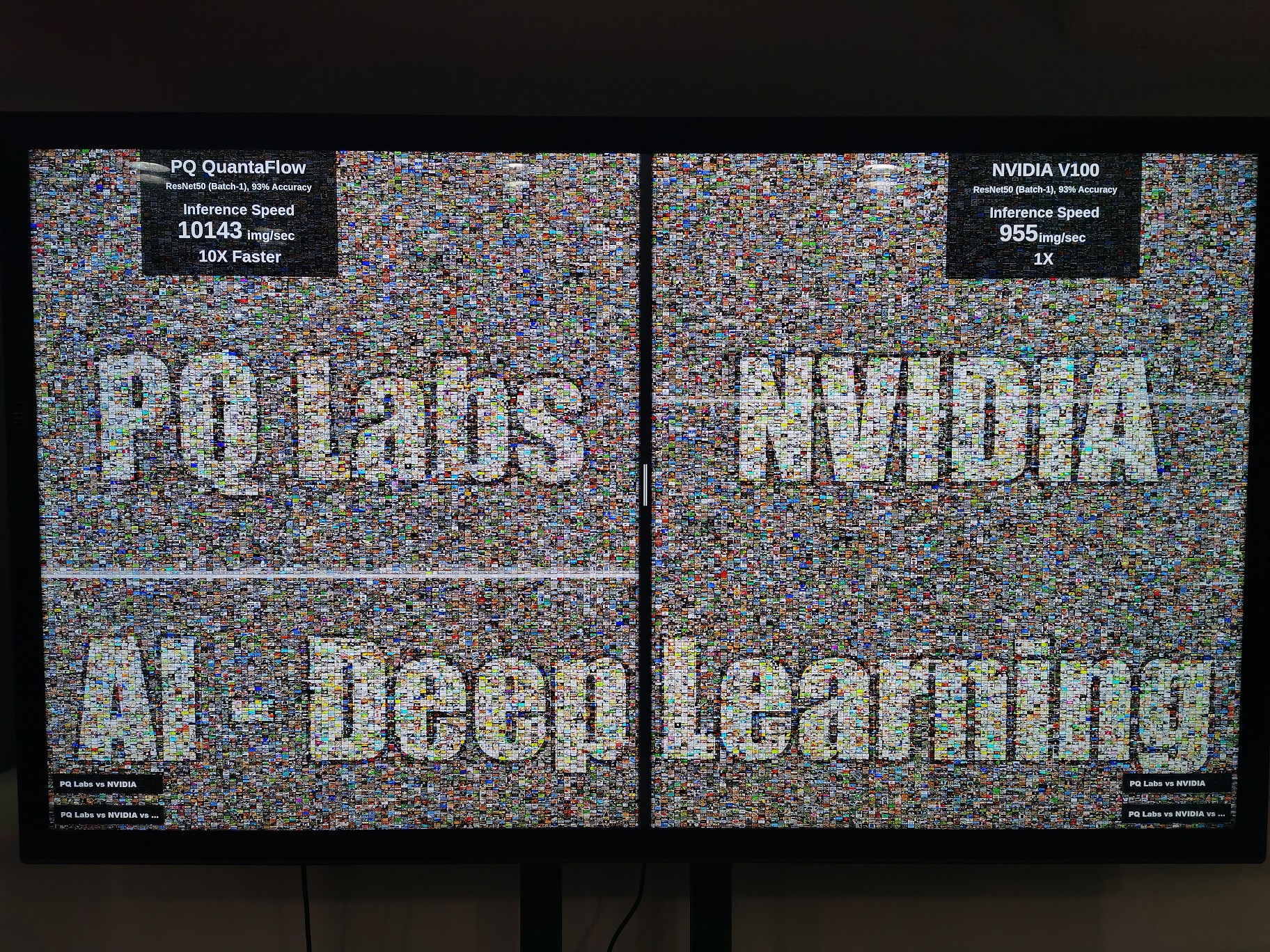

CES 2020: AI + Quantum Flow Boosts Deep Learning Speed by 10x to 15x, Powered by pqlabs.ai

Does a mixture of AI and quantum exist? The answer is in a superposition: "maybe". Tech startups are exploring this uncharted area in pursuit of superior AI performance.

LAS VEGAS, Jan. 8, 2020 /PRNewswire-PRWeb/ -- PQ Labs Inc, a company in Fremont, CA specializing in developing innovative technologies, unveiled its QuantaFlow AI architecture at CES 2020 (South Hall #25858). The new architecture includes a classical RISC-V processor, a QuantaFlow Generator and a QF Evolution Space. It is the first time the industry has seen such an architecture, which could change the future of AI and deep learning inference solutions.

"Quanta" is the plural of "quantum". The QuantaFlow AI SoC architecture is designed to simulate massively parallel transformation / evolution that is very similar to quantum computation.

Quantum computing is based on continuous unitary transformation of qubits. A qubit (quantum bit) can represent several possible states at once (e.g. a cat being mathematically both alive and dead at the same time). This makes the quantum computing model well suited to massively parallel computation. Like the classical computing model, quantum computing has three major procedures: input, process and output. Unlike the classical model, however, the process part of quantum computing is done by continuous transformation / evolution rather than the step-by-step read-control-writeback of a classical Turing machine. There is no observation (read/writeback) until the final stage. The qubit evolves in a multiverse of worlds, each representing one possible state in the superposition.
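The input / process / output flow described above can be illustrated with a small classical simulation. The following is a minimal textbook-style state-vector sketch, not a description of how QuantaFlow or any real quantum hardware works. The function names (`apply_hadamard`, `probabilities`, `measure`) and the choice of three qubits are assumptions made for illustration.

```python
import math
import random


def apply_hadamard(state, target, n_qubits):
    """Apply a Hadamard gate (a unitary transformation) to one qubit."""
    h = 1.0 / math.sqrt(2.0)
    new_state = list(state)
    bit = 1 << target
    for i in range(1 << n_qubits):
        if (i & bit) == 0:
            a, b = state[i], state[i | bit]
            new_state[i] = h * (a + b)
            new_state[i | bit] = h * (a - b)
    return new_state


def probabilities(state):
    """Probability of each basis state: squared magnitude of its amplitude."""
    return [abs(amp) ** 2 for amp in state]


def measure(state, rng):
    """Observe once, at the end: sample a basis state by its probability."""
    indices = range(len(state))
    return rng.choices(indices, weights=probabilities(state), k=1)[0]


# Input: three qubits, all prepared in state 0 (illustrative size).
n_qubits = 3
state = [0j] * (1 << n_qubits)
state[0] = 1 + 0j

# Process: evolve the whole state with unitaries, with no observation between steps.
for q in range(n_qubits):
    state = apply_hadamard(state, q, n_qubits)

# Output: a single observation at the final stage.
probs = probabilities(state)
outcome = measure(state, random.Random(0))
print(probs)
print(format(outcome, "03b"))
```

After the three Hadamard steps, all eight basis states carry equal probability, which is the superposition the paragraph refers to. The observation at the end returns only one of them.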

In reality, quantum computers are still very far from practical use. Even after Google's quantum supremacy demonstration, very few algorithms can be run, and none of them has real-world applications. QRAM (quantum random access memory) exists only in theory; scientists and engineers do not yet know how to build it. Qubits are fragile and collapse easily, so they need error correction. This may not be an issue for small lab experiments with a few qubits, but the requirement grows exponentially as the number of qubits increases. For example, one billion error-correcting qubits are needed just to get 1,000 functional qubits to do something productive, according to Nature magazine.

In the face of such technical difficulties, other approaches to quantum-like computation are being explored. For example, Canadian tech startup D-Wave announced its 5,000-qubit quantum annealing computer in 2019 (Google's quantum supremacy computer has only 53 qubits). However, academic scientists argue that D-Wave's computer is not "true quantum", but rather a quantum algorithm and quantum simulation. Nevertheless, for some specific problems, D-Wave's computer is still 100 million times faster than a classical computer running a classical algorithm, according to Google's published research paper. D-Wave's "qubits" are quite stable and scale very well, at the expense of interconnectivity. Despite the academic debate, D-Wave is the world's first "quantum computer" that solves actual problems such as drug molecule classification, ad optimization, map coloring, weather simulation, etc. However, each computer is huge and costs about $15 million, which has prevented mass adoption.

Other approaches try to bridge the gap between quantum-style algorithms and real silicon implementations for artificial intelligence. QuantaFlow is the answer from PQ Labs, Inc.

QuantaFlow simulates a virtual transformation / evolution space for qf-bit registers. A classical single-core RISC-V processor provides logical control, retrieval of observation results, etc. The QuantaFlow Generator converts input data from a low-dimensional space to a high-dimensional space and then starts continuous transformation / evolution. The process is of minimum granularity, highly parallel in nature and asynchronous. At the end of the process, information must be extracted from the transformation / evolution space by the Bit Observer unit, which measures the qf-bit registers at multiple points in time and space. In addition, Hot-Patching can be used to change the evolution path of qf-bits dynamically. When a more significant deformation of the evolution space is needed, the RISC-V processor issues a warm "reboot" to the evolution space. All of these operations can be executed in the blink of an eye. With the help of these dynamic operations, QuantaFlow can run all kinds of neural network models (e.g. ResNet-50 (2015), MobileNet (2017), EfficientNet (2019), etc.) without speed degradation or hitting the "memory wall". GPUs and ASIC AI accelerators are usually suited to a certain collection of neural network models (mostly compute-bound ones, e.g. ResNet); their performance degrades significantly on a different collection of network models, especially the newer generations (MobileNet 2017, EfficientNet 2019), because these newer models are all memory-bound.
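The distinction between compute-bound and memory-bound models, and the "memory wall" it leads to, is commonly explained with the roofline model. The sketch below is a minimal illustration under stated assumptions: the accelerator figures (`PEAK_TOPS`, `BANDWIDTH_TB_PER_S`) and the two layer intensities are hypothetical round numbers chosen for the example, not measurements of QuantaFlow, any GPU, or any of the networks named above.

```python
def attainable_tops(peak_tops, bandwidth_tb_per_s, ops_per_byte):
    """Roofline bound: throughput is capped by compute or by memory traffic.

    TB/s multiplied by ops/byte gives tera-ops per second, the same unit as peak_tops.
    """
    return min(peak_tops, bandwidth_tb_per_s * ops_per_byte)


def is_memory_bound(peak_tops, bandwidth_tb_per_s, ops_per_byte):
    """A workload is memory-bound when memory traffic, not compute, sets the cap."""
    return bandwidth_tb_per_s * ops_per_byte < peak_tops


# Hypothetical accelerator (assumed values, for illustration only).
PEAK_TOPS = 100.0          # peak compute, in tera-operations per second
BANDWIDTH_TB_PER_S = 1.0   # memory bandwidth, in terabytes per second

# Hypothetical arithmetic intensities, in operations per byte moved.
layers = {
    "high_intensity_layer": 200.0,  # lots of data reuse: compute-bound
    "low_intensity_layer": 10.0,    # little data reuse: memory-bound
}

results = {
    name: (
        attainable_tops(PEAK_TOPS, BANDWIDTH_TB_PER_S, intensity),
        is_memory_bound(PEAK_TOPS, BANDWIDTH_TB_PER_S, intensity),
    )
    for name, intensity in layers.items()
}

for name, (tops, memory_bound) in results.items():
    kind = "memory-bound" if memory_bound else "compute-bound"
    print(f"{name}: at most {tops:.0f} TOPS ({kind})")
```

With these assumed numbers, the low-intensity layer can use at most a tenth of the peak compute however many arithmetic units the chip has. This is the kind of degradation the paragraph attributes to memory-bound models.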

The QuantaFlow architecture is just one step further in exploring superior performance for AI deep learning inference. There are many devils in the details and many areas for innovation. For example, the design process does not follow the traditional workflow of Verilog hardware description plus EDA toolchains. Such a traditional workflow cannot explore a tremendously large design space within a reasonable time and with a reasonable amount of human resources. Nor can it achieve good performance, because the heuristic and annealing algorithms used in the toolchain can only reach a locally optimal result and cannot optimize to the ultimate physical limit of silicon. The QuantaFlow design flow is accelerated by high-level languages (instead of Verilog), and the implementation is optimized by in-house algorithms to extract maximum horsepower from silicon.

With all of the above effort, QuantaFlow can achieve a 10X speedup on ResNet-50 (batch=1, accuracy=93%, INT8) compared to an Nvidia V100 in the same network configuration. For newer network models, significantly higher speedups will be announced.

For more information please visit http://www.pqlabs.ai

SOURCE PQ Labs Inc
